1.The problem for contemporary blockchain information stack

There are a number of challenges {that a} trendy blockchain indexing startup could face, together with:

Huge quantities of knowledge. As the quantity of knowledge on the blockchain will increase, the information index might want to scale as much as deal with the elevated load and supply environment friendly entry to the information. Consequently, it results in increased storage prices, gradual metrics calculation, and elevated load on the database server.Complicated information processing pipeline. Blockchain expertise is complicated, and constructing a complete and dependable information index requires a deep understanding of the underlying information constructions and algorithms. The range of blockchain implementations inherits it. Given particular examples, NFTs in Ethereum are normally created inside sensible contracts following the ERC721 and ERC1155 codecs. In distinction, the implementation of these on Polkadot, as an example, is normally constructed immediately inside blockchain runtime. These must be thought of NFTs and must be saved as these.Integration capabilities. To offer most worth to customers, a blockchain indexing answer could must combine its information index with different methods, reminiscent of analytics platforms or APIs. That is difficult and requires vital effort positioned into the structure design.

As blockchain expertise has develop into extra widespread, the quantity of knowledge saved on the blockchain has elevated. It’s because extra persons are utilizing the expertise, and every transaction provides new information to the blockchain. Moreover, blockchain expertise has advanced from easy money-transferring functions, reminiscent of these involving using Bitcoin, to extra complicated functions involving the implementation of enterprise logic inside sensible contracts. These sensible contracts can generate giant quantities of knowledge, contributing to the elevated complexity and measurement of the blockchain. Over time, this has led to a bigger and extra complicated blockchain.

On this article, we evaluation the evolution of Footprint Analytics’ expertise structure in levels as a case research to discover how the Iceberg-Trino expertise stack addresses the challenges of on-chain information.

Footprint Analytics has listed about 22 public blockchain information, and 17 NFT market, 1900 GameFi mission, and over 100,000 NFT collections right into a semantic abstraction information layer. It’s probably the most complete blockchain information warehouse answer on the earth.

No matter blockchain information, which incorporates over 20 billions rows of information of monetary transactions, which information analysts often question. it’s totally different from ingression logs in conventional information warehouses.

We’ve skilled 3 main upgrades prior to now a number of months to fulfill the rising enterprise necessities:

2. Structure 1.0 Bigquery

At first of Footprint Analytics, we used Google Bigquery as our storage and question engine; Bigquery is a superb product. It’s blazingly quick, simple to make use of, and supplies dynamic arithmetic energy and a versatile UDF syntax that helps us shortly get the job performed.

Nonetheless, Bigquery additionally has a number of issues.

Information just isn’t compressed, leading to excessive prices, particularly when storing uncooked information of over 22 blockchains of Footprint Analytics.Inadequate concurrency: Bigquery solely helps 100 simultaneous queries, which is unsuitable for prime concurrency situations for Footprint Analytics when serving many analysts and customers.Lock in with Google Bigquery, which is a closed-source product。

So we determined to discover different various architectures.

3. Structure 2.0 OLAP

We have been very focused on among the OLAP merchandise which had develop into highly regarded. Probably the most engaging benefit of OLAP is its question response time, which generally takes sub-seconds to return question outcomes for large quantities of knowledge, and it will probably additionally help 1000’s of concurrent queries.

We picked top-of-the-line OLAP databases, Doris, to provide it a strive. This engine performs properly. Nonetheless, in some unspecified time in the future we quickly bumped into another points:

Information varieties reminiscent of Array or JSON usually are not but supported (Nov, 2022). Arrays are a typical kind of knowledge in some blockchains. As an example, the subject discipline in evm logs. Unable to compute on Array immediately impacts our capacity to compute many enterprise metrics.Restricted help for DBT, and for merge statements. These are widespread necessities for information engineers for ETL/ELT situations the place we have to replace some newly listed information.

That being mentioned, we couldn’t use Doris for our entire information pipeline on manufacturing, so we tried to make use of Doris as an OLAP database to unravel a part of our drawback within the information manufacturing pipeline, performing as a question engine and offering quick and extremely concurrent question capabilities.

Sadly, we couldn’t substitute Bigquery with Doris, so we needed to periodically synchronize information from Bigquery to Doris utilizing it as a question engine. This synchronization course of had a number of points, one in every of which was that the replace writes received piled up shortly when the OLAP engine was busy serving queries to the front-end purchasers. Subsequently, the pace of the writing course of received affected, and synchronization took for much longer and generally even turned not possible to complete.

We realized that the OLAP might resolve a number of points we face and couldn’t develop into the turnkey answer of Footprint Analytics, particularly for the information processing pipeline. Our drawback is greater and extra complicated, and lets say OLAP as a question engine alone was not sufficient for us.

4. Structure 3.0 Iceberg + Trino

Welcome to Footprint Analytics structure 3.0, an entire overhaul of the underlying structure. We’ve redesigned your entire structure from the bottom as much as separate the storage, computation and question of knowledge into three totally different items. Taking classes from the 2 earlier architectures of Footprint Analytics and studying from the expertise of different profitable large information initiatives like Uber, Netflix, and Databricks.

4.1. Introduction of the information lake

We first turned our consideration to information lake, a brand new kind of knowledge storage for each structured and unstructured information. Information lake is ideal for on-chain information storage because the codecs of on-chain information vary extensively from unstructured uncooked information to structured abstraction information Footprint Analytics is well-known for. We anticipated to make use of information lake to unravel the issue of knowledge storage, and ideally it will additionally help mainstream compute engines reminiscent of Spark and Flink, in order that it wouldn’t be a ache to combine with several types of processing engines as Footprint Analytics evolves.

Iceberg integrates very properly with Spark, Flink, Trino and different computational engines, and we will select probably the most acceptable computation for every of our metrics. For instance:

For these requiring complicated computational logic, Spark would be the alternative.Flink for real-time computation.For easy ETL duties that may be carried out utilizing SQL, we use Trino.

4.2. Question engine

With Iceberg fixing the storage and computation issues, we had to consider selecting a question engine. There usually are not many choices obtainable. The options we thought of have been

Crucial factor we thought of earlier than going deeper was that the long run question engine needed to be suitable with our present structure.

To help Bigquery as a Information SourceTo help DBT, on which we rely for a lot of metrics to be producedTo help the BI device metabase

Based mostly on the above, we selected Trino, which has excellent help for Iceberg and the group have been so responsive that we raised a bug, which was fastened the following day and launched to the most recent model the next week. This was the only option for the Footprint group, who additionally requires excessive implementation responsiveness.

4.3. Efficiency testing

As soon as we had selected our course, we did a efficiency check on the Trino + Iceberg mixture to see if it might meet our wants and to our shock, the queries have been extremely quick.

Understanding that Presto + Hive has been the worst comparator for years in all of the OLAP hype, the mixture of Trino + Iceberg fully blew our minds.

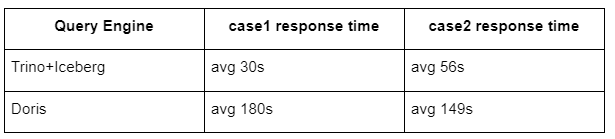

Listed here are the outcomes of our exams.

case 1: be part of a big dataset

An 800 GB table1 joins one other 50 GB table2 and does complicated enterprise calculations

case2: use a giant single desk to do a definite question

Take a look at sql: choose distinct(tackle) from the desk group by day

The Trino+Iceberg mixture is about 3 instances quicker than Doris in the identical configuration.

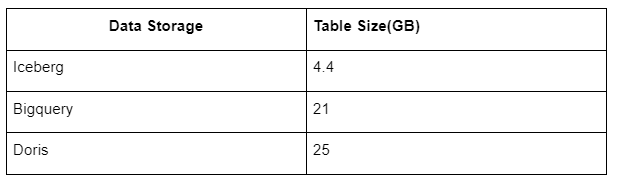

As well as, there may be one other shock as a result of Iceberg can use information codecs reminiscent of Parquet, ORC, and many others., which can compress and retailer the information. Iceberg’s desk storage takes solely about 1/5 of the area of different information warehouses The storage measurement of the identical desk within the three databases is as follows:

Word: The above exams are examples now we have encountered in precise manufacturing and are for reference solely.

4.4. Improve impact

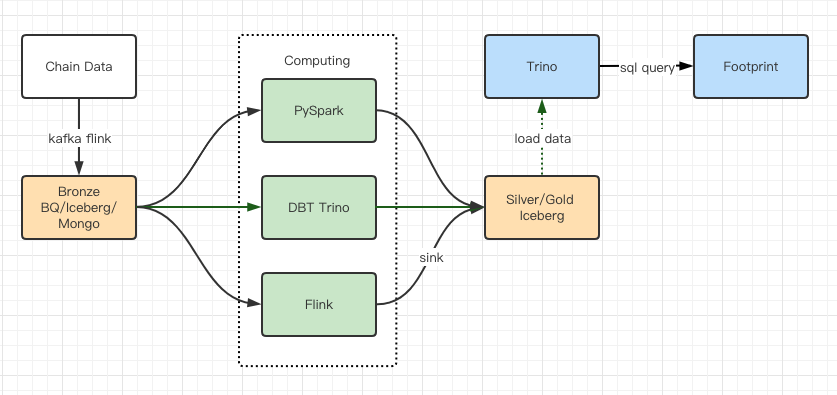

The efficiency check studies gave us sufficient efficiency that it took our group about 2 months to finish the migration, and it is a diagram of our structure after the improve.

A number of pc engines match our varied wants.Trino helps DBT, and may question Iceberg immediately, so we not must cope with information synchronization.The superb efficiency of Trino + Iceberg permits us to open up all Bronze information (uncooked information) to our customers.

A number of pc engines match our varied wants.Trino helps DBT, and may question Iceberg immediately, so we not must cope with information synchronization.The superb efficiency of Trino + Iceberg permits us to open up all Bronze information (uncooked information) to our customers.

5. Abstract

Since its launch in August 2021, Footprint Analytics group has accomplished three architectural upgrades in lower than a yr and a half, because of its robust need and willpower to convey the advantages of the most effective database expertise to its crypto customers and stable execution on implementing and upgrading its underlying infrastructure and structure.

The Footprint Analytics structure improve 3.0 has purchased a brand new expertise to its customers, permitting customers from totally different backgrounds to get insights in additional numerous utilization and functions:

Constructed with the Metabase BI device, Footprint facilitates analysts to realize entry to decoded on-chain information, discover with full freedom of alternative of instruments (no-code or hardcord), question total historical past, and cross-examine datasets, to get insights in no-time.Combine each on-chain and off-chain information to evaluation throughout web2 + web3;By constructing / question metrics on high of Footprint’s enterprise abstraction, analysts or builders save time on 80% of repetitive information processing work and give attention to significant metrics, analysis, and product options primarily based on their enterprise.Seamless expertise from Footprint Internet to REST API calls, all primarily based on SQLReal-time alerts and actionable notifications on key indicators to help funding choices