In 1999, the world’s first commercially available color video and camera phone arrived in the form of the Kyocera VP-210 in Japan. A year after its release, worries over the rapid rise in “up-skirt” voyeurism the phones enabled spread quickly throughout the country, prompting wireless carriers to institute a policy ensuring the phones they offered would feature a loud camera shutter noise that users couldn’t disable. The effectiveness of that measure is, to this day, up for debate. But the event remains a valuable history lesson on the widespread adoption of technology: new tools make doing everything easier, and not just the good stuff.

Prompt-based AI art generators are now having their VP-210 moment. Once programs like Midjourney, DALL-E, and Stable Diffusion began proliferating in the summer of 2022, it was only a matter of time before users started creating and iterating on hypersexualized and stereotyped images of women to populate social media accounts and sell as NFTs. But AI’s detractors need to avoid conflating the tech’s inherent potential and value with its gross misuse, even when those misuses are blatantly sexist.

Likewise, if its proponents want to defend the AI art revolution, they need to make it clear that they’re ready to have this conversation and work toward solutions. If they don’t, the movement will rightfully lose its credibility, and the maligned tech will face an even steeper uphill battle than it already does.

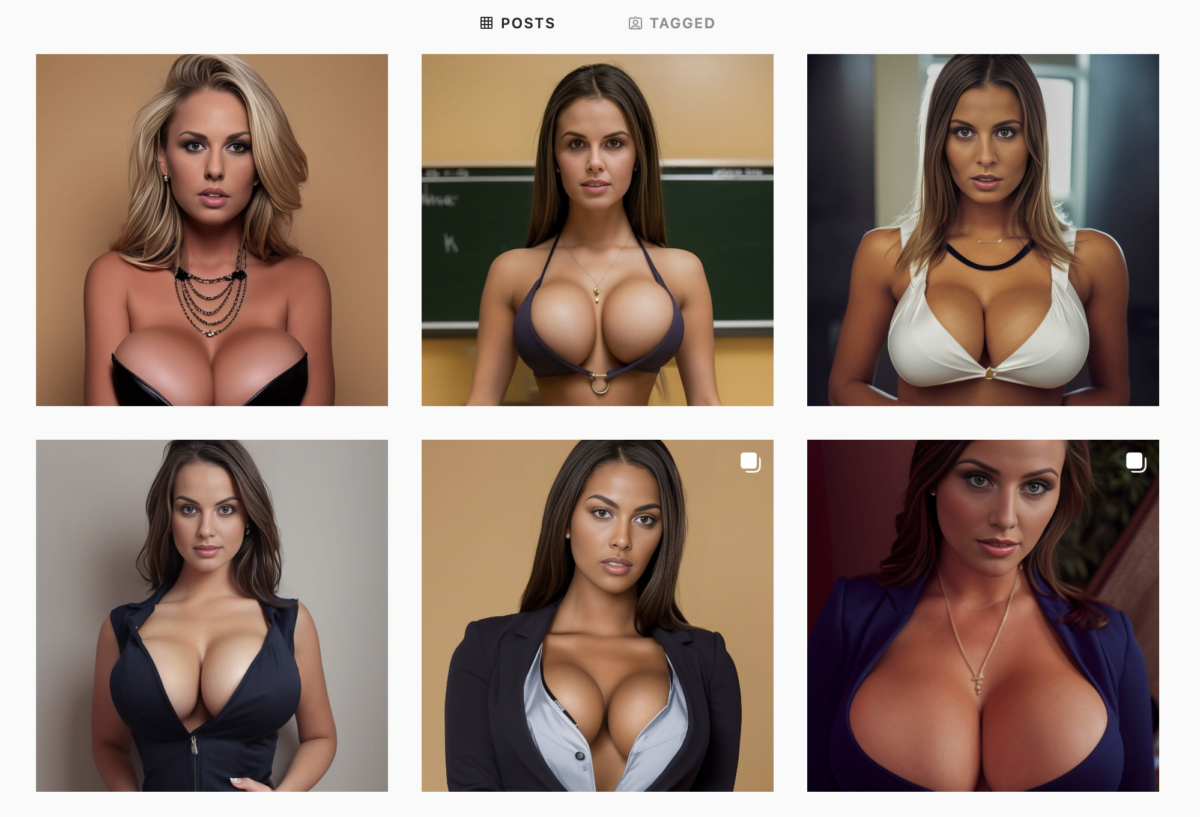

Social media has long held a reputation for catalyzing low body satisfaction and self-esteem, in women especially. Misuse of AI art tools has the potential to exacerbate this problem. A quick search on the most popular social media platforms (for terms we’re choosing not to reveal here to avoid encouraging their use) returns a variety of accounts devoted to displaying AI creations depicting photorealistic women (the ones displaying men are comparatively few and far between).

The images in these accounts range from tasteful and artistic to outright grotesque and pornographic. Most fall into the latter category, presenting portrayals of women that stray so far into objectification as to reach parody. While we’re including images from an assortment of accounts of this nature in this article, we’ve decided not to include identifying information about them to avoid giving a platform to those who, according to expert consensus, promote stereotypes and cause harm.

While well-intended content policies from programs like DALL-E and Midjourney prevent users from including “adult content” and “gore” in their prompt craft, the near limitless flexibility of language allows users to circumvent many of these policies’ limitations with some ease. The fact that these AI systems have inherent biases against women built into them has only made this phenomenon worse. There’s also nothing stopping individuals from building and training their own AI models, freeing them from such restrictions altogether.

Distilling the objectification of women

The conversations surrounding the objectification-empowerment dynamic of women in the fashion, entertainment, and porn industries are complex, nuanced, and important ones, but they all revolve around the agency and dignity of human beings. What makes the reductive and debased images of AI-generated women feel so sinister is not dissimilar from what makes deepfakes so reprehensible: the tech strips away those pesky moral hangups regarding consent and character and distills the very essence of sexual objectification into its purest form.

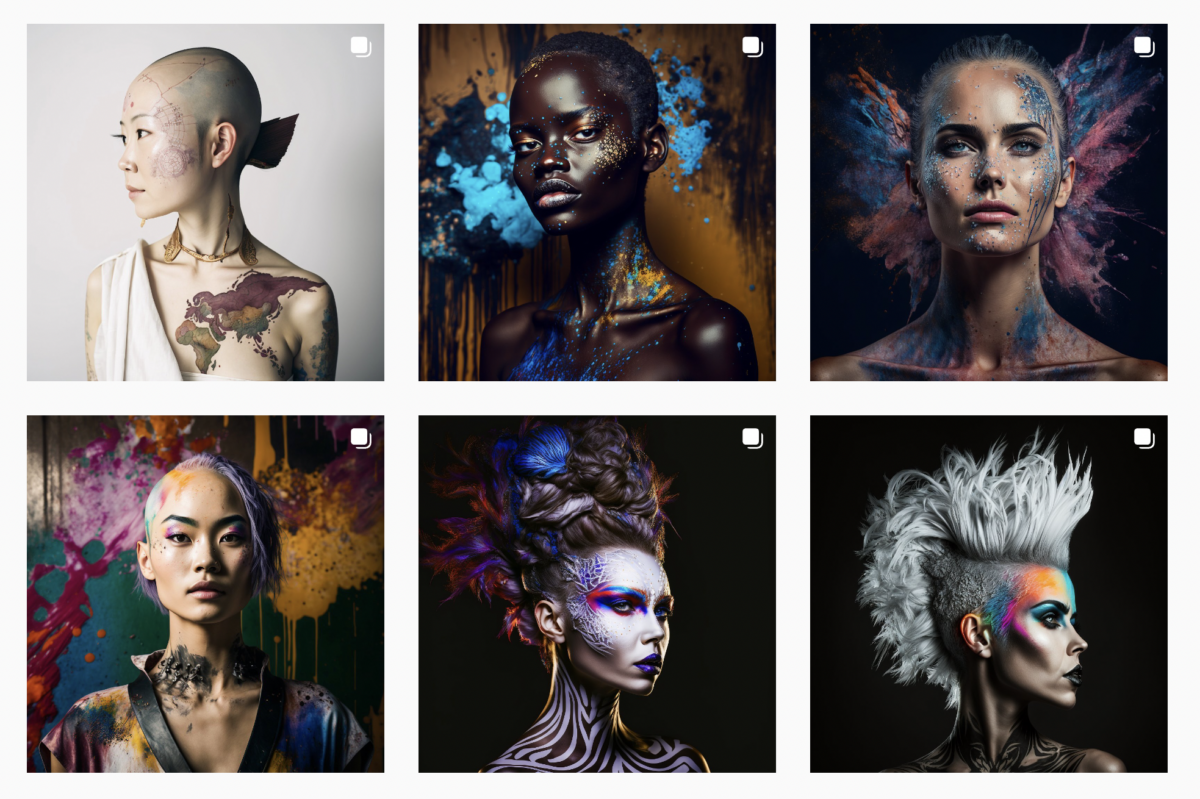

On a broad scale, images produced by AI art tools that depict people often blend the intimate and the alien. Some would argue that this is even part of their appeal, an ability to both spotlight and subvert the uncanny valley in truly creative and thought-provoking ways.

The same can’t be said of the AI-generated women so often seen in accounts on Twitter and Instagram. They’re far more unsettling representations, not just because they’ve emerged from algorithms trained on unknown billions of images of real-life women, but because the images are constructed through the prompt-based specification of sexually associated parts of women. The result is a strange, echoing specter of countless countenances and bodies synthesized into a masquerade of reality.

Can AI art tools celebrate women instead?

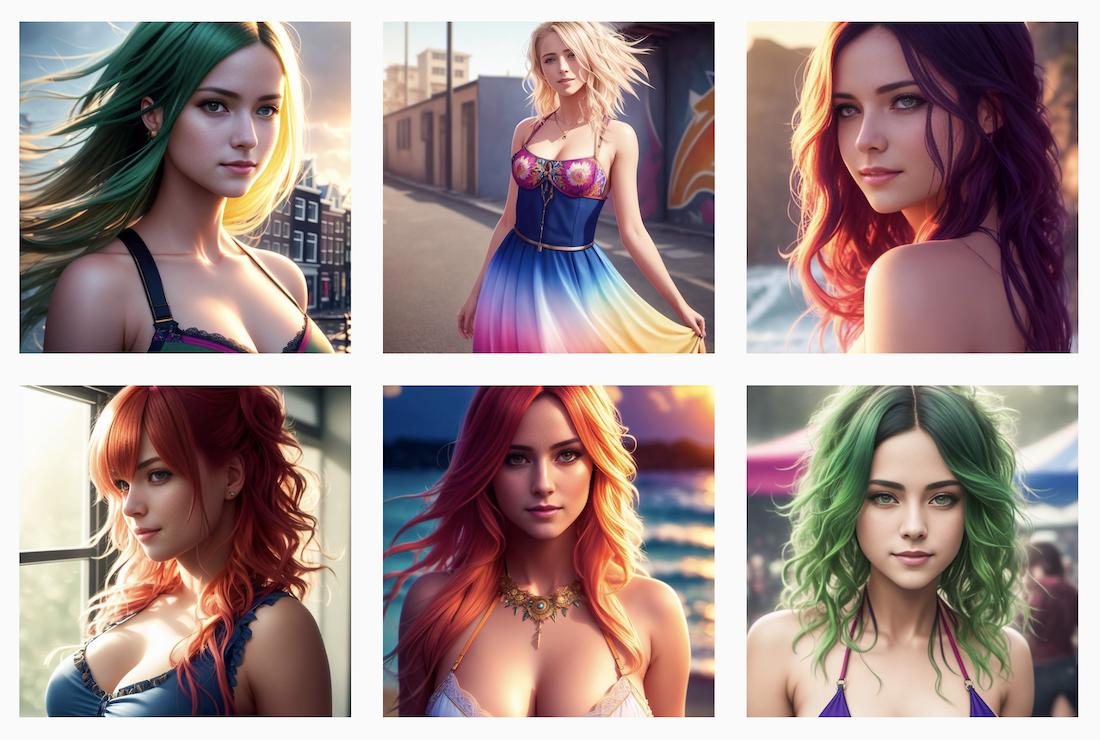

The line between objectifying and empowering blurs quickly. Several social media accounts featuring AI-generated content are operated by women, for example, and claim to celebrate women in their diversity, beauty, and cultural contexts. Rather than creating and posting caricatures that have been reduced to little more than their sexual organs, accounts like these tend to present women from the shoulders up, and the results resemble something more akin to actual human beings. This is progress of a kind, but even on such pages, most of the women depicted have strikingly similar bone structures and body types to one another, not dissimilar to the so-called “same-face syndrome” phenomenon that has plagued Disney productions for years.

Still, these differences in degree matter. An increasing number of NFT collections are beginning to use AI in their creation, for example, and many that represent women are arguably doing so in a respectful and innovative way. Musess is one such collection, having built its NFTs from AI’s interpretation of artist Eva Adamian’s work depicting the nude female form. The collection, the project website states, was born of the desire to create something that showed the “borderless, inclusive, and unveiled beauty” of women.

This isn’t a new problem

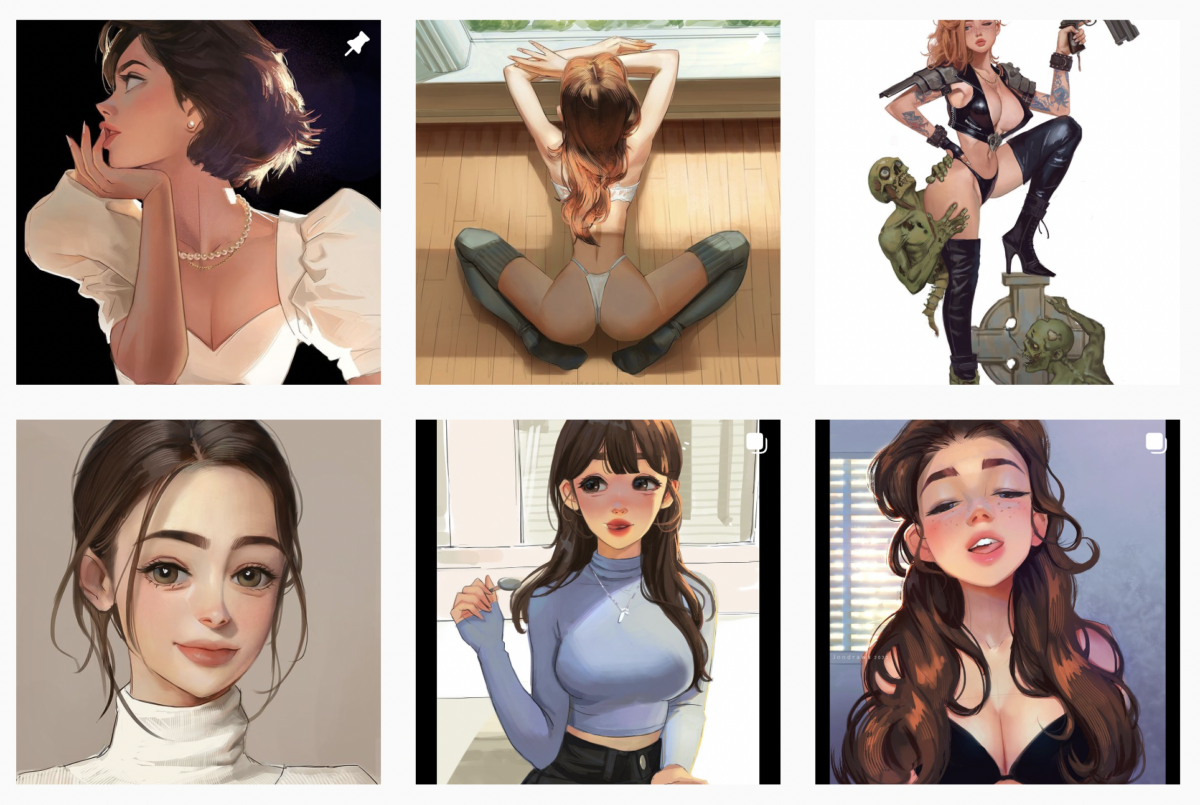

Women being objectified in creative media is nothing new; AI has simply made it easier to achieve. This is illustrated by the fact that Instagram’s algorithm quickly directs you from accounts depicting AI-generated women to others of a similar nature, except that the women on those pages were hand-drawn by graphic illustrators. Apart from the stylistic differences, there’s little to differentiate the two in how they perceive and present women. Just as AI art is art in a different expression, objectification is objectification, regardless of the medium in which it’s expressed.

AI’s advocates need to lead the charge for change

Every technology used to create art in the past has also been used to depict women in a spectrum ranging from dignified to one-dimensional. AI is now the latest innovation to be used in this way. Ironically, the arrival of the complex and necessary conversation surrounding AI’s role in the objectification of women is a sign of progress. Fundamentally, the issue behind people using the tech to reduce women to their sexuality alone is no different than it always has been.

Supporters of AI and AI art tools need to keep this in mind. A common argument among proponents asserts that, while these tools are unfortunately being used to plagiarize artists’ work and vision, this is no reason to disqualify the technology outright or deny the good it’s doing in the world. Surely, the dissemination of tools that creatively empower billions globally must validate their existence, right?

The answer is that they do. But the other question is this: Will AI’s supporters embrace the validity of issues that have less to do with ethics in the art world and more to do with human dignity and expressions of sexism?

AI art tools are going to go through a gauntlet of criticism, both legitimate and hollow, before they come out the other end flush with every other piece of technology we use in our daily lives. Until then, they’ll need to weather the storm, even if that storm includes a proliferation of sexist images made possible as a direct result of the tools. The Kyocera VP-210 and every phone that came after it made it easier to take photos of women without their consent. Any reasonable person can and should feel disturbed by that fact. But such deplorable behavior represents a poor knock-down argument against the idea of the camera phone itself. As with every technology, society needs to find a way to move forward with AI while working to minimize its misuse as much as possible. Now is the time for AI art proponents to lead that charge.
