The Future of Privacy Forum published a framework for biometric data regulations for immersive technologies on Tuesday.

The FPF’s Risk Framework for Body-Related Data in Immersive Technologies report discusses best practices for collecting, using, and transferring body-related data across entities.

#NEW: @futureofprivacy releases its ‘Risk Framework for Body-Related Data in Immersive Technologies’ by authors @spivackjameson & @DanielBerrick.

This analysis assists organizations to ensure they’re handling body-related data safely & responsibly. https://t.co/FC1VOsaAFe

— Future of Privacy Forum (@futureofprivacy) December 12, 2023

Organisations, businesses, and individuals can incorporate the FPF’s observations as recommendations and a foundation for facilitating safe, responsible extended reality (XR) policies. This pertains to entities requiring large amounts of biometric data in immersive technologies.

Additionally, those following the guidelines of the report can apply the framework to document reasons and methodologies for handling biometric data, comply with laws and standards, evaluate risks associated with privacy and safety, and weigh ethical considerations when collecting data from devices.

The framework applies not only to XR-related organisations but also to any institution leveraging technologies dependent on the processing of biometrics.

Jameson Spivack, Senior Policy Analyst, Immersive Technologies, and Daniel Berrick, Policy Counsel, co-authored the report.

Your Data: Handled with Care

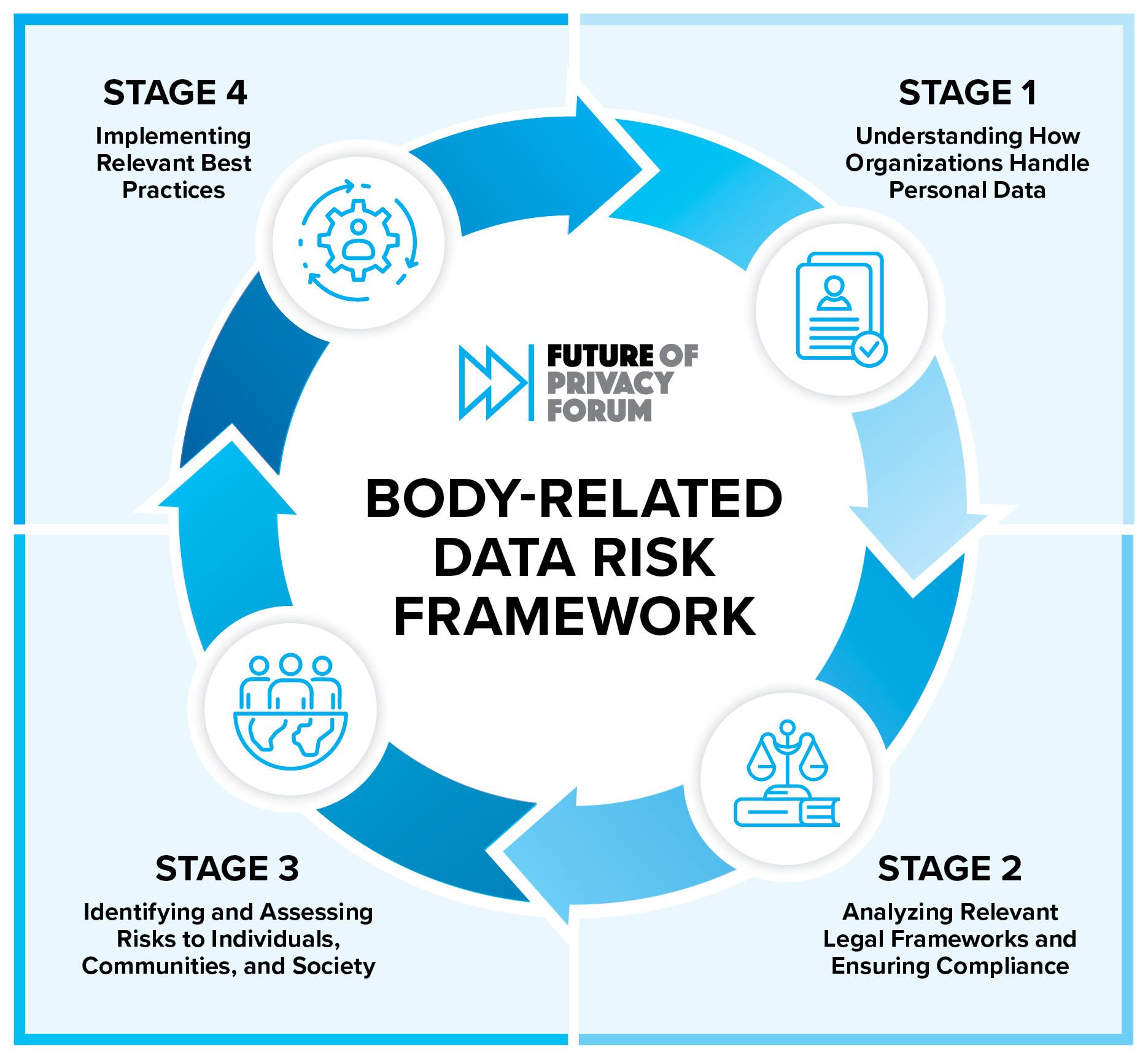

In order to understand how to handle personal data, organisations must identify potential privacy risks, ensure compliance with laws, and implement best practices to boost safety and privacy, the FPF explained.

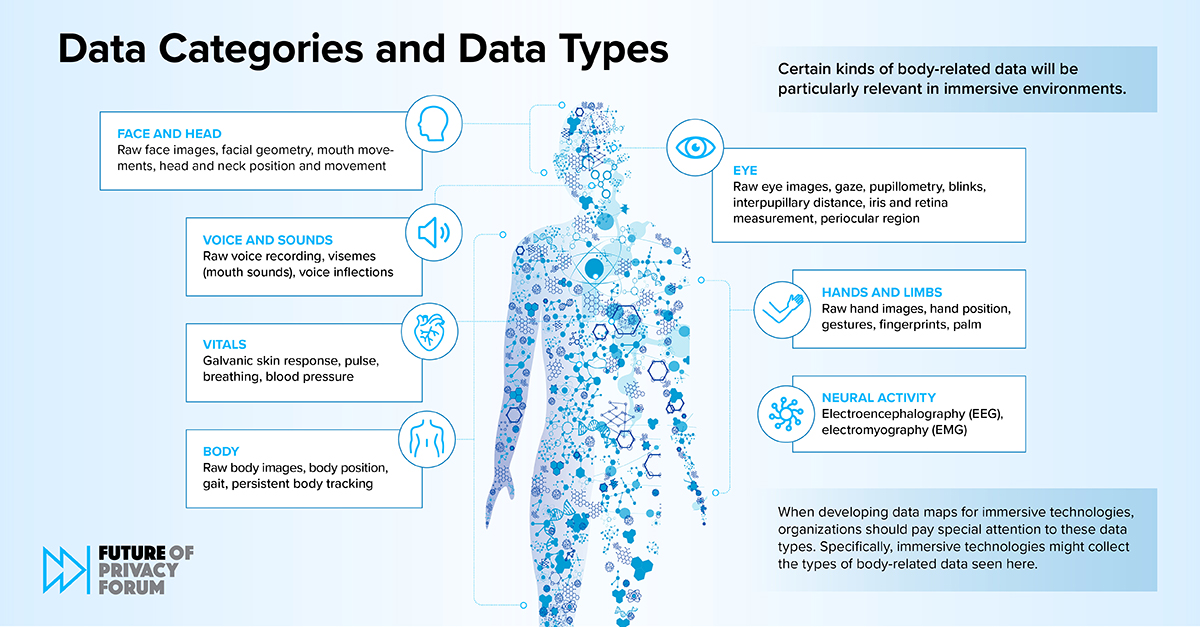

According to Stage One of the framework, organisations can do so by:

Creating data maps that outline their data practices linked to biometric information

Documenting their use of data and practices

Identifying pertinent stakeholders, direct and third-party, affected by the organisation’s data practices

Companies would analyse applicable legal frameworks in Stage Two to ensure compliance. This could involve companies collecting, using, or transferring “body-related data” impacted by US privacy laws.

To comply, the framework recommends that organisations “understand the individual rights and business obligations” applicable to “existing comprehensive and sectoral privacy laws.”

Organisations should also analyse emerging laws and regulations and how they would impact “body-based data practices.”

In Stage Three, companies, organisations, and institutions should identify and assess risks to others. It explained that this includes the individuals, communities, and societies they serve.

It said that privacy risks and harms could derive from data “used or handled in particular ways, or transferred to particular parties.”

It added that legal compliance “may not be enough to mitigate risks.”

In order to maximise safety, companies can follow several steps to protect data, such as proactively identifying and reducing risks associated with data practices.

This could involve impacts on the following:

Identifiability

Use to make key decisions

Sensitivity

Partners and other third-party groups

The potential for inferences

Data retention

Data accuracy and bias

User expectations and understanding

After evaluating a group’s data use policy, organisations can assess the fairness and ethics behind its data practices, based on identified risks, it explained.

Finally, the FPF framework recommended the implementation of best practices in Stage Four, which involved a “number of legal, technical, and policy safeguards organisations can use.”

It added this would help organisations keep updated with “statutory and regulatory compliance, minimize privacy risks, and ensure that immersive technologies are used fairly, ethically, and responsibly.”

The framework recommends that organisations intentionally implement best practices by comprehensively “touching all parts of the data lifecycle and addressing all relevant risks.”

Organisations can also collaboratively implement best practices using those “developed in consultation with multidisciplinary teams within an organization.”

These would involve legal, product, engineering, trust, safety, and privacy-related stakeholders.

Organisations can protect their data by:

Localising and processing data on devices and storage

Minimising data footprints

Regulating or implementing third-party management

Offering meaningful notice and consent

Preserving data integrity

Providing user controls

Incorporating privacy-enhancing technologies

After adopting these measures, organisations could evaluate them and suitably align them as a coherent strategy. Afterwards, they could assess the best practices on an ongoing basis to maintain efficacy.

EU Proceeds with Artificial Intelligence (AI) Act

The news comes right after the European Union moved forward with its AI Act, which the FPF states will have a “broad extraterritorial impact.”

Currently under negotiations with member-states, the legislation aims to protect citizens from harmful and unethical use of AI-based solutions.

Political agreement was reached on the EU’s #AIAct, which will have a broad extraterritorial impact. If you would like to gain insights into key legal implications of the regulation, join @kate_deme for an in-depth FPF training tomorrow at 11 am ET.: https://t.co/weVgDdsvRh

— Future of Privacy Forum (@futureofprivacy) December 11, 2023

The organisation is offering guidance, expertise, and training for companies as the Act prepares to enter into force. The Act is one of the biggest changes in data privacy policy since the introduction of the General Data Protection Regulation (GDPR) in May 2016.

The European Commission stated it wants to “regulate artificial intelligence (AI)” to ensure improved conditions for using and rolling out the technology.

It said in a statement,

“In April 2021, the European Commission proposed the first EU regulatory framework for AI. It says that AI systems that can be used in different applications are analysed and classified according to the risk they pose to users. The different risk levels will mean more or less regulation. Once approved, these will be the world’s first rules on AI.”

According to the Commission, it aims to approve the Act by the end of the year.

Biden-Harris Executive Order on AI

In late October, the Biden-Harris administration issued an executive order on the regulation of AI. The Government’s Executive Order on Safe, Secure, and Trustworthy Artificial Intelligence aims to safeguard citizens around the world from the harmful effects of AI programmes.

Enterprises, organisations, and experts will need to comply with the new regulations, which require “developers of the most powerful AI systems” to share their safety assessments with the US Government.

Responding to the order, the FPF said it was “incredibly comprehensive” and offered a “whole of government approach and with an impact beyond government agencies.”

It continued in its official statement,

“Although the executive order focuses on the government’s use of AI, the influence on the private sector will be profound due to the extensive requirements for government vendors, worker surveillance, education and housing priorities, the development of standards to conduct risk assessments and mitigate bias, the investments in privacy enhancing technologies, and more.”

The assertion additionally known as on lawmakers to implement “bipartisan privateness laws.” Doing so was “crucial precursor for protections for AI that influence weak populations.”

UK Hosts AI Safety Summit

The UK also hosted its AI Safety Summit at the iconic Bletchley Park, where world-renowned scientist Alan Turing cracked the Nazis’ World War II-era Enigma cryptography.

At the world-class event, some of the industry’s top-level experts, executives, companies, and organisations gathered to outline protections to regulate AI.

Attendees included representatives of the US, UK, EU, and UN, the Alan Turing Institute, The Future of Life Institute, Tesla, OpenAI, and many others. The groups discussed methods to create a shared understanding of the risks of AI, collaborate on best practices, and develop a framework for AI safety research.

The Fight for Data Rights

The news comes as several organisations enter fresh alliances in order to tackle ongoing concerns over the use of virtual, augmented, and mixed reality (VR/AR/MR), AI, and other emerging technologies.

For example, Meta Platforms and IBM launched a massive alliance to develop best practices for artificial intelligence and biometric data, and to help create regulatory frameworks for tech companies worldwide.

The Global AI Alliance hosts more than 30 organisations, companies, and individuals from across the global tech community and includes members such as AMD, Hugging Face, CERN, The Linux Foundation, and others.

Additionally, organisations like the Washington, DC-based XR Association, Europe’s XR4Europe alliance, the globally-recognised Metaverse Standards Forum, and the Gatherverse, among others, have contributed greatly to the implementation of best practices for those involved in building the future of spatial technologies.
